Many products are available in netCDF format. Unfortunately, the files do not conform to the COARDS or CF conventions commonly used in climate research. For example, the units associated with the latitude (lat) and longitude (lon) variables are both "degrees" rather than the udunits-conforming "degrees_north" and "degrees_east". More problematic is the variable 'time', which is designated UNLIMITED but has units relative to midnight of the current day rather than to some fixed base date. For example:

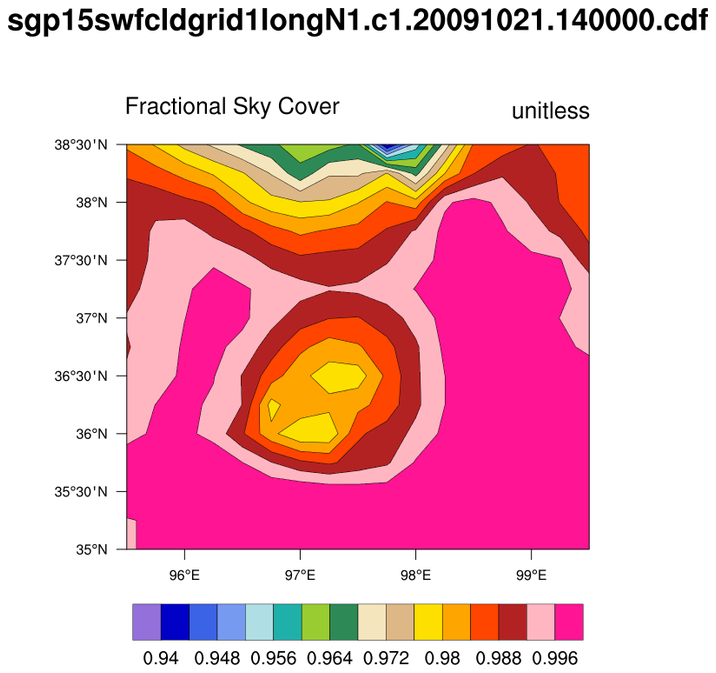

    netcdf sgp15swfcldgrid1longN1.c1.20091021.140000 {
    dimensions:
            time = UNLIMITED ; // (35 currently)
            lat = 15 ;
            lon = 17 ;
    variables:
            int base_time ;
                    base_time:string = "21-Oct-2009,14:00:00 GMT" ;
                    base_time:long_name = "Base time in Epoch" ;
                    base_time:units = "seconds since 1970-1-1 0:00:00 0:00" ;
            double time_offset(time) ;
                    time_offset:long_name = "Time offset from base_time" ;
                    time_offset:units = "seconds since 2009-10-21 14:00:00 0:00" ;
            double time(time) ;
                    time:long_name = "Time offset from midnight" ;
                    time:units = "seconds since 2009-10-21 00:00:00 0:00" ;
            float cloudfraction(time, lat, lon) ;
    [SNIP]
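To make the three time encodings in the header concrete, here is a minimal Python sketch (standard library only) that decodes them to absolute UTC times. The reference dates are copied from the units attributes above; the sample record (30 minutes after the file's base time) and the helper name `decode` are hypothetical and chosen only for illustration.

```python
from datetime import datetime, timedelta, timezone


def decode(origin: datetime, seconds: float) -> datetime:
    """Convert 'seconds since <origin>' to an absolute UTC datetime."""
    return origin + timedelta(seconds=seconds)


# Reference dates taken from the units attributes in the header above.
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)                # base_time units
OFFSET_ORIGIN = datetime(2009, 10, 21, 14, tzinfo=timezone.utc)  # time_offset units
MIDNIGHT = datetime(2009, 10, 21, tzinfo=timezone.utc)           # time units

# base_time is the file's start time expressed as seconds since the epoch.
base_time = int((OFFSET_ORIGIN - EPOCH).total_seconds())

# Hypothetical record: 30 minutes after the file's base time.
time_offset = 1800.0            # seconds since base_time
time = 14 * 3600 + 1800.0       # seconds since midnight of the same day

from_offset = decode(EPOCH, base_time + time_offset)
from_time = decode(MIDNIGHT, time)
```

Both routes give the same instant for a single file. Across several daily files, however, each file's 'time' is counted from that file's own midnight, so the values are only meaningful together with the reference date in that file's units attribute.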

Climate studies typically involve statistics over some time interval. It is common practice to span many files to create (say) time series, climatologies, or more sophisticated diagnostics. Being able to select data for specific time periods can be essential.

NCL could do this by using addfiles and then explicitly creating the appropriate time variable via cd_calendar and cd_inv_calendar. A more efficient approach is to use the netCDF Operators (NCO). The ncrcat operator has been programmed to recognize ARM files: it recalculates the 'time_offset' variable relative to the 'base_time' in the first file, producing monotonically increasing 'time_offset' values.
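The rebasing described above amounts to simple arithmetic. The following Python sketch illustrates the idea only; it is not NCO's actual implementation. The function name `rebase_time_offsets` and the two sample daily files are hypothetical.

```python
def rebase_time_offsets(files):
    """Merge per-file time offsets onto a single time axis.

    files: list of (base_time, offsets) pairs in chronological order, where
    base_time is in seconds since 1970-01-01 and offsets are seconds since
    that file's own base_time.
    Returns offsets in seconds since the FIRST file's base_time.
    """
    first_base = files[0][0]
    merged = []
    for base_time, offsets in files:
        shift = base_time - first_base   # seconds between this file and the first
        merged.extend(shift + t for t in offsets)
    return merged


# Hypothetical sample: two daily files, three records each, 15 minutes apart.
day1 = (1256133600, [0.0, 900.0, 1800.0])   # base_time: 2009-10-21 14:00 UTC
day2 = (1256220000, [0.0, 900.0, 1800.0])   # base_time: 2009-10-22 14:00 UTC

merged = rebase_time_offsets([day1, day2])
```

Within each input file the offsets restart at zero, whereas the merged values increase monotonically, which is what makes the result usable as a time coordinate.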

If ARM.1997-2008.nc is the file created by ncrcat, then in NCL:

    f     = addfile ("ARM.1997-2008.nc", "r")   ; created by ncrcat
    x     = f->cloudfraction                    ; (time, lat, lon)
    xqc   = f->qc_cloudfraction                 ; packed integer; 0 means good
    timeo = f->time_offset                      ; Time offset from base_time [ncrcat]
    x&time   = timeo                            ; overwrite original 'time'
    xqc&time = timeo                            ; with time_offset variable